Jaan Aru is a doctoral student at the Max Planck Institute for Brain Research in Frankfurt and a researcher at the Talis Bachmann Lab in Estonia, where he investigates how the neural machinery of the brain produces conscious experience. See more about Jaan’s research interests.

When I open your skull, I see neurons and the web of their connections. I can measure fluctuating membrane potentials, neurons firing, neurotransmitters being released to the synaptic cleft, ion channels opening, etc. You are a machine – a very complicated machine, but nevertheless a machine.

Yet from the first-person perspective, from the inside, you do not feel like a machine. It feels like something to be you, to be afraid, to feel joy. You have consciousness. How do these two perspectives – the machine and the subjective experience – fit together? How does the neuronal machinery create consciousness of oneself and the surrounding world? The laws of nature as we currently understand them do not explain how matter could become mind. Although consciousness is “everything we have and everything we are”, we do not know how it is produced by the neurobiological processes in the brain.

The problem of consciousness is not only a problem for one graduating neuroscientist; it has been acknowledged to be one of the toughest challenges for modern science by many esteemed researchers. To express it in the words of Erwin Schrödinger, a Nobel Prize winner and one of the founders of quantum mechanics:

“The world is a construct of our sensations, perceptions, memories. It is convenient to regard it as existing objectively on its own. But it certainly does not become manifest by its mere existence. Its becoming manifest is conditional on very special goings-on in very special parts of this very world, namely on certain events that happen in a brain. That is an inordinately peculiar kind of implication, which prompts the question: What particular properties distinguish these brain processes and enable them to produce the manifestation? Can we guess which material processes have this power, which not? Or simpler: What kind of material process is directly associated with consciousness?” (Schrödinger, 1958/2012)

Consciousness – not a scientific problem?

The problem of consciousness has emerged into the twilight of experimental research only over the last decades. In the 1980s some scientists began to relate neurobiological mechanisms of the brain to the experimental results of cognitive psychology (one of these pioneers was based at the University of Tartu: Bachmann, 1984). Prior to that, the “c-word” was banned from serious scientific discussions and, indeed, some resistance can be felt to this day. The main argument against treating consciousness as a scientific question often went along the following lines: “Consciousness is subjective, but science is objective; therefore, studying consciousness is a waste of time”.

Today few scientists hold this view, as it has been understood that: 1) it would be strange to study objects that are millions of light-years away or that are so tiny we cannot even see them under the best microscope, yet not to study consciousness; 2) science is about trying to figure out how to measure phenomena that were considered unmeasurable; 3) objective science can be done on conscious experience (in more technical terms, “The requirement that science be objective does not prevent us from getting an epistemically objective science of a domain that is ontologically subjective” (Searle, 1998)).

So how can one measure consciousness? It cannot get much simpler than that – one just has to ask the subject what he or she perceives!

Studying consciousness experimentally

Consider a typical experimental setup for studying consciousness: binocular rivalry. During binocular rivalry, a different image is presented to each eye (for example, with the help of glasses with polarized filters, just like those now used to view 3D movies). Although the two eyes receive different stimuli, the subject consciously experiences only one of them at any given moment, and this experience alternates during viewing, so that sometimes one image is perceived consciously and sometimes the other. Importantly, everything is objectively the same during the whole viewing epoch – the eyes “receive” both images all the time. What changes is the conscious experience: sometimes the content presented to the left eye and sometimes the one presented to the right eye is consciously perceived.

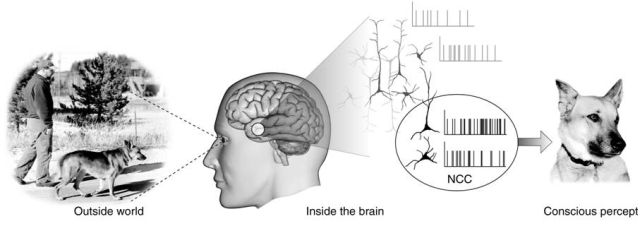

Such phenomena offer a neat way to study the neurobiological mechanisms of conscious experience: by dissociating objective stimulation (which stays the same all the time) from subjective perception (which changes), we can locate those neurobiological processes that go hand in hand with changes in subjective experience. For example, neurons early in the processing pathway react to both stimuli, regardless of whether one of them is not consciously perceived, but neurons higher up in the hierarchy are activated only when one or the other stimulus is consciously perceived.

The hard problem of consciousness

Using binocular rivalry and many other experimental paradigms, researchers have tried to find the neuronal mechanisms that underlie our conscious experience. Such research has been performed for two decades and has produced a variety of experimental results. Many prominent theories about consciousness exist and some of them have made their way into neuroscience textbooks.

Yet there is no consensus about how consciousness is produced in the brain. Some leading scientists are confident that consciousness is manifested in the activity of a neuronal global workspace, others feel that conscious perception requires long-range synchrony between different areas of the brain, and still others propose that consciousness is grounded in feedback activity sent from the higher cortical areas back to the lower ones.

There are three fundamental problems with all these theories. Firstly, the results supporting these theories are collected with measurement techniques that are not specific enough to reveal neural processes that underlie any conscious experience. A specific conscious experience (for example, the taste of a morning coffee) is presumably related to a spatio-temporal activation pattern encompassing millions of neurons, but we are currently not able to measure a neural ensemble like that.

Secondly, the majority of results supporting these theories are collected by contrasting conditions with and without conscious perception, but it has been argued that such a contrast is not specific enough to isolate only those neural processes that directly contribute to conscious experience. Rather, such an experimental contrast will also pick up neural processes that precede and follow conscious perception.

Whereas the first two problems can in principle be solved, and will be solved, by better measurement techniques and more specific experimental contrasts, the third problem is more fundamental.

Let’s say in ten years we have found the neural correlates for the conscious experience of a morning coffee – neurons in areas X, Y and Z are firing in the pattern titi-taa-titi-taa-tum-tum-tum. Have we solved the problem of consciousness? Have we understood how consciousness emerges from the neurobiological processes? Most likely not. The third problem is that the brute fact of “the neural correlate for the experience of a morning coffee is areas XYZ firing in titi-taa…” does not explain why this neural activity pattern is accompanied by conscious experience (of the morning coffee). Indeed, why should any neural activity pattern be felt consciously from the first-person perspective? This is the hard problem of consciousness.

Information-integration theory of consciousness

It seems that the only sensible way to try to solve the third problem, the hard problem of consciousness, is to come up with a theoretical understanding of why the work of certain complex systems in this Universe is accompanied by conscious experience.

Giulio Tononi has argued that we could understand consciousness with the help of information theory. His information-integration theory of consciousness is based on two axioms: 1) consciousness is informative – each conscious experience rules out billions of other possible conscious experiences; 2) consciousness is integrated – each conscious experience is unified. These axioms led Tononi to propose that “consciousness corresponds to the capacity of the system to integrate information”. According to the theory, information integration is accompanied by consciousness just as an ion is accompanied by electric charge.
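To make the phrase “integrate information” a little more concrete, here is a toy sketch in Python. It is emphatically not Tononi’s measure: it only computes, for a hypothetical system of two binary units, how much information the whole carries beyond its two parts taken separately (for two parts this is simply their mutual information). The function names and the two example systems are made up for illustration.

```python
import math

def entropy(probs):
    """Shannon entropy, in bits, of a discrete probability distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def integration(joint):
    """Toy 'integration' of a two-part system: H(A) + H(B) - H(A, B).

    `joint` maps (state_of_A, state_of_B) -> probability.
    This is ordinary mutual information, used here only as a crude
    stand-in for the idea of integrated information.
    """
    marg_a, marg_b = {}, {}
    for (a, b), p in joint.items():
        marg_a[a] = marg_a.get(a, 0.0) + p
        marg_b[b] = marg_b.get(b, 0.0) + p
    return (entropy(marg_a.values())
            + entropy(marg_b.values())
            - entropy(joint.values()))

# Hypothetical system 1: two units that flip independently of each other.
independent = {(0, 0): 0.25, (0, 1): 0.25, (1, 0): 0.25, (1, 1): 0.25}

# Hypothetical system 2: two units that are always in the same state.
coupled = {(0, 0): 0.5, (1, 1): 0.5}

print(integration(independent))  # 0.0 bits: the parts share nothing
print(integration(coupled))      # 1.0 bit: the whole is more than its parts
```

In this toy picture the independent units have many possible states but are not integrated at all, while the coupled units are. The theory’s actual quantity is defined differently and is far harder to compute, but the intuition that both richness of states and unity matter is the same.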

There is a lot of hype surrounding Tononi’s theory because such an information-theoretic framework could help quantify and therefore measure consciousness. And only by quantifying and measuring consciousness can we answer whether an octopus is conscious, whether my laptop or smartphone is conscious, whether human babies or baby dolphins are conscious, or whether the non-communicative patient lying in the bed is to some extent conscious.

Despite the high expectations, there are two problematic aspects of the theory. The first is practical: although in principle the information-theoretic framework makes consciousness quantifiable, it is not yet possible to measure this quantity for complex biological systems.

The second problem is more fundamental: how can we know if this theory is correct? How could we know if this theory is even a step in the right direction? The two axioms described above are very intuitive, but that is also their problem: they are nothing more than intuitions supported by thought experiments. The theory is founded on these intuitions, but if one of them is misleading (or one crucial intuition is missing), the whole information-theoretic formulation of the theory is simply wrong. And we all know that our intuitions can be erroneous – consider, for example, the long-held belief that the Earth is flat.

What’s next?

Tononi’s theory might be wrong, but his work shows that we do need a theory of consciousness that explains why certain brain states are associated with consciousness. Experimental results are necessary for building a good theory, but it is unlikely that any experimental result by itself can explain how conscious experience arises from neural activity. We need a theoretical understanding of how conscious experience fits with the fundamental laws of nature.

However, it might still be too early to commit to any theory, because our experimental facts are simply not good enough to provide meaningful constraints for a good theory. But I guess this is all part of normal scientific progress – not having a theory, generating some experimental facts, coming up with an early theory that is most likely wrong, collecting better experimental results, refining the theory, gaining still cleaner experimental findings, and ultimately understanding the phenomenon in question.

Thus, although sometimes the problem of consciousness seems too big for a small man like me, I am not overly concerned – if our measurements and experiments get better and our theories more refined, we might indeed one day understand how the machine inside the skull generates conscious experience. Science for the win!

See also Jaan Aru’s Estonian-language blog about the problem of consciousness.